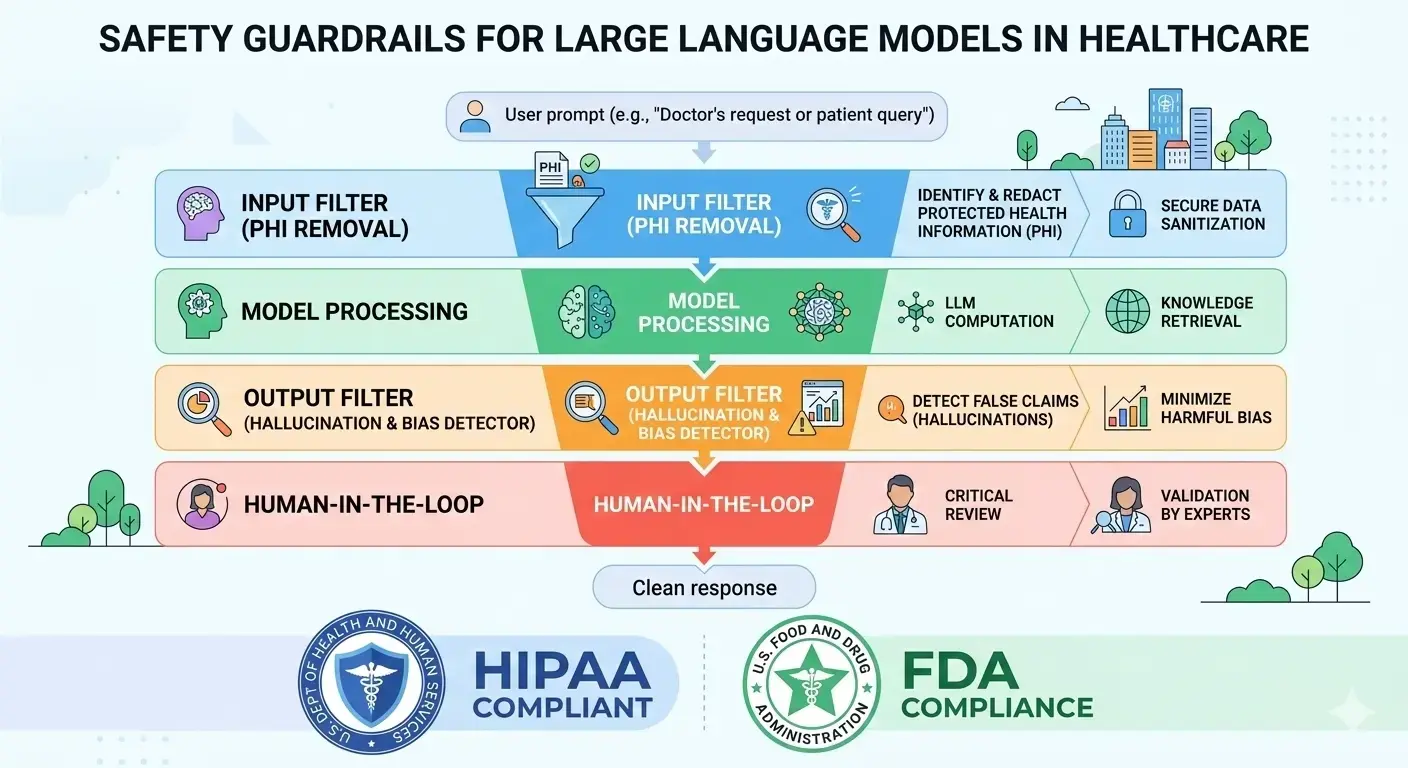

TL;DR: Large Language Models (LLMs) offer transformative potential for healthcare, but their deployment requires rigorous safety guardrails. This article outlines the essential technical, regulatory, and operational controls—including HIPAA compliance, input/output filtering, and human-in-the-loop verification—necessary to protect patient data and ensure clinical safety.

Large language models (LLMs) like ChatGPT and Gemini are opening up powerful new use cases in healthcare – from drafting clinical notes to automating patient triage and support. They promise to save time on documentation, improve patient engagement, and streamline operations. But healthcare is an inherently high-stakes domain. Unchecked LLM outputs can inadvertently leak patient data or even dispense unsafe medical advice. In fact, experts note that in healthcare “guardrails don’t just reduce reputational risk – they enforce legal compliance under HIPAA, FDA regulations, and clinical trial protocols”. Implementing strong safety guardrails (technical filters, process controls, and compliance checks) is essential to reap the benefits of AI while protecting patients and providers.

Healthcare LLM deployments must systematically address two risks: exposure of sensitive data and incorrect outputs. A 2025 review of AI-in-healthcare studies found that over a third of published projects failed to report any effective measures to protect patient health information (PHI). That gap is serious, because even something as simple as entering a patient’s name into a consumer chatbot could violate HIPAA privacy rules. Remember: no consumer AI service is HIPAA-compliant out of the box. Any healthcare use of an LLM must build in data security and model controls (e.g. encryption, access control, auditing) from the ground up. At the same time, clinicians must remain aware of the models’ inherent tendency to “hallucinate”, confidently generating false or misleading medical information. Studies have shown that LLMs can produce inaccurate diagnoses or treatment suggestions if not carefully validated. Such errors in medicine can harm patients, erode trust, and violate ethical norms.

Regulatory Compliance Considerations

HIPAA and PHI Protection

In the United States, the Health Insurance Portability and Accountability Act (HIPAA) is the cornerstone of healthcare data privacy. HIPAA strictly regulates how covered entities (healthcare providers, health plans, and clearinghouses) and their business associates handle “protected health information” (PHI), from patient identifiers to diagnoses and treatment records. Under HIPAA’s Privacy and Security Rules, any system that handles PHI (including an LLM-based tool) needs administrative, physical, and technical safeguards such as access controls, audit logs, and encryption, and the Breach Notification Rule requires timely notification if PHI is compromised. This also means signing a Business Associate Agreement (BAA) with any cloud or AI vendor that processes PHI on your behalf. As one industry expert quips, “if it’s free, you’re the product”: without a signed BAA with your AI provider, submitting PHI to that model is a compliance violation. Even prompting an unsecured chatbot with a patient name or record number can expose PHI.

Key HIPAA-driven practices for LLM use include: encrypting data in transit and at rest; pseudonymizing or removing patient identifiers before sending data to the model, in keeping with HIPAA’s “minimum necessary” standard; and ensuring the model is never trained on real PHI (for third-party models, confirm this in your vendor contract). In practice, this means you should intercept prompts and strip out names, dates of birth, addresses, medical record numbers, and other identifiers. Any output containing PHI should be similarly redacted, and every access and response should be logged for auditing. In short, treat the LLM like any other secure medical system: it must comply with HIPAA’s Privacy and Security Rules.
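The prompt-scrubbing step described above can be sketched with regular expressions. This is a minimal illustration that assumes identifiers arrive in well-formatted shapes; `PHI_PATTERNS` and `redact_phi` are hypothetical names. Pattern matching alone misses free-text names and addresses, so production systems typically pair it with a named-entity recognition model.

```python
import re

# Hypothetical patterns for a few structured identifiers only.
# Names, street addresses, and free-text dates need an NER model or similar.
PHI_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "MRN": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
    "DATE": re.compile(r"\b(?:0?[1-9]|1[0-2])/(?:0?[1-9]|[12]\d|3[01])/(?:19|20)\d{2}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def redact_phi(text: str) -> tuple[str, list[str]]:
    """Replace each matched identifier with a [TYPE] placeholder.

    Returns the redacted text plus the identifier types that were found,
    so the caller can log *that* PHI was stripped without logging the PHI.
    """
    found = []
    for label, pattern in PHI_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            found.append(label)
    return text, found
```

For example, `redact_phi("Pt John, MRN: 00123456, DOB 04/12/1961, call 555-867-5309 re: SSN 123-45-6789.")` returns `"Pt John, [MRN], DOB [DATE], call [PHONE] re: SSN [SSN]."` together with `["SSN", "MRN", "DATE", "PHONE"]`. Note that the name "John" passes through untouched, which is exactly the gap an NER step has to close.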

FDA and Medical Device Regulation

HIPAA covers data, but what if your LLM actually makes clinical recommendations? In the U.S., the FDA can regulate software that analyzes patient-specific information to diagnose or treat disease as a medical device (Software as a Medical Device, or SaMD), although certain clinical decision support tools are exempt under the 21st Century Cures Act. An LLM that interprets symptoms, suggests diagnoses, or recommends treatments could therefore fall under FDA oversight, and startups building AI tools for diagnosis or treatment should assume they may need FDA clearance or approval before commercialization. To date, the FDA has authorized hundreds of AI-enabled medical devices (mostly in imaging), but none of them uses a dynamically updating LLM: authorized algorithms are typically “locked”, and changes to their logic require a new submission or a change control plan the FDA has reviewed in advance. The FDA has explicitly acknowledged the challenge of regulating adaptive AI models and is developing guidance, including by convening a Digital Health Advisory Committee. Separately, HHS’s Office of the National Coordinator for Health IT (ONC) finalized its HTI-1 rule in late 2023, which demands transparency and risk management for AI-based decision support in certified health IT.

Practically, this means early in development you must ask: “What is the LLM’s intended use?” If it provides any patient care guidance, plan for it as a SaMD. Document your clinical validation strategy, risk analysis, and change control plan in line with the Good Machine Learning Practice (GMLP) guiding principles published by the FDA together with Health Canada and the UK’s MHRA. Work with legal experts to determine whether your use case qualifies as a medical device; if so, budget time for regulatory submissions. The FDA and regulators abroad (the MHRA in the UK, EU authorities) are rapidly updating their AI rules, so staying informed of the latest guidance is part of your guardrails strategy.

GDPR and Other Data Protection Laws

If your AI app handles data from Europe or other jurisdictions, international privacy laws also apply. The EU’s General Data Protection Regulation (GDPR) treats health data as a “special category” of personal data and requires explicit patient consent or another Article 9 condition (such as the provision of healthcare) for processing. GDPR mandates data minimization, encourages pseudonymization, and grants patients rights to access, correct, or erase their data. Many US states (California’s CCPA/CPRA, Virginia’s VCDPA, etc.) have their own consumer privacy laws that may touch healthcare data. Under GDPR, large-scale processing of health data generally requires a Data Protection Impact Assessment (DPIA), and running one is good practice anywhere you use patient data with AI. Build in support for data subject rights as well (e.g. processes to delete a patient’s data on request). In short, assume that whichever region you operate in, the highest privacy standard wins. De-identify or tokenize data before it reaches the model wherever possible, and ensure data residency rules (e.g. EU data staying in the EU) are followed.
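Tokenization, as recommended above, could look like the following minimal sketch. The `PseudonymVault` class is hypothetical: it swaps identifiers for keyed HMAC tokens before a prompt leaves your boundary and maps them back afterwards. Keep in mind that under GDPR pseudonymized data is still personal data, so this reduces exposure rather than taking the data out of scope.

```python
import hashlib
import hmac
import secrets
from typing import Optional


class PseudonymVault:
    """Hypothetical token vault: the LLM only ever sees tokens, never raw identifiers."""

    def __init__(self, key: Optional[bytes] = None):
        # Assumption: in production the key comes from a KMS/HSM, not process memory.
        self._key = key if key is not None else secrets.token_bytes(32)
        self._token_to_value: dict[str, str] = {}

    def _token_for(self, value: str) -> str:
        # HMAC-SHA256 gives the same token for the same identifier under one key;
        # without the key, nobody can recompute a token from a guessed identifier.
        digest = hmac.new(self._key, value.encode("utf-8"), hashlib.sha256).hexdigest()
        return f"PT_{digest[:16]}"

    def tokenize(self, value: str) -> str:
        token = self._token_for(value)
        self._token_to_value[token] = value
        return token

    def detokenize(self, text: str) -> str:
        # Re-identify only inside the trusted boundary, after the model has responded.
        for token, value in self._token_to_value.items():
            text = text.replace(token, value)
        return text

    def erase(self, value: str) -> bool:
        # Honor an erasure request by deleting the mapping; False if it was unknown.
        return self._token_to_value.pop(self._token_for(value), None) is not None
```

A prompt such as `f"Summarize the discharge plan for {vault.tokenize('Jane Roe')}."` never contains the name, and `vault.detokenize(reply)` restores it in the model's answer. The `erase` method only drops the re-identification mapping; fully honoring an erasure request also means purging the patient's data from logs, backups, and downstream stores.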

Common Use Cases and Risks

LLMs can be applied across a wide range of healthcare scenarios, but each brings its own safety considerations. For instance:

- Clinical Documentation: Many hospitals use LLMs to draft or summarize physician notes, discharge summaries, and patient charts. This can dramatically cut clerical work, but the model must be supervised. Guardrails here include filtering out PHI in the draft and allowing doctors to edit and approve all AI-generated text. An LLM should never create an official medical document without human review.

- Patient-Facing Chatbots: AI chatbots can triage patient symptoms, answer FAQs, or guide users through care instructions. While convenient, these bots must strictly avoid diagnosing conditions or prescribing treatments without clinician oversight. For example, an input rule can catch queries like “Please diagnose my symptoms” or “What medication should I take?” and block them. Instead, the bot might only offer general information or prompt the user to see a doctor. All chatbot interactions should be logged and monitored for problematic queries.

- Clinical Decision Support: In research or internal use, LLMs might suggest diagnostic possibilities or evidence-based treatments. However, any output must be clearly flagged as advisory. Avoid presenting AI suggestions as definitive medical advice. Model outputs in this use case should be traceable (with references or source data) so clinicians can verify them. If the LLM is integrated into a diagnostic workflow (like suggesting lab tests or interpreting results), it may trigger FDA SaMD requirements.

- Administration & Coding: LLMs are also used to automate non-clinical tasks, like coding diagnoses for billing, drafting policy documents, or summarizing insurance claims. These applications can be lower risk, but data privacy still matters. Ensure LLMs don’t inadvertently populate PHI into the wrong records, and have humans audit critical outputs (e.g. billing codes) for accuracy.

- Medical Research and Education: LLMs can help researchers by summarizing literature or even drafting papers and trial protocols. Here the risk is mainly accuracy and intellectual property. Models should cite sources when possible, but every citation must be verified, since LLMs can fabricate references; outputs should also be checked by domain experts.

Across all these cases, the common thread is oversight and verification. No LLM in healthcare should run unattended. Always plan for a human-in-the-loop and a clear escalation process if something goes wrong.
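The input rule described for patient-facing chatbots can be sketched as a small scope check that runs before the prompt ever reaches the model. The intent names, patterns, and redirect wording below are illustrative assumptions; keyword rules miss paraphrases and misspellings, so in practice they are usually backed by a trained intent classifier.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical out-of-scope intents for a patient-facing FAQ bot.
BLOCKED_INTENTS = {
    "diagnosis_request": re.compile(r"\bdiagnos\w*|\bwhat (?:do|could) i have\b", re.IGNORECASE),
    "medication_request": re.compile(
        r"\b(?:what|which) (?:medication|medicine|drug|dose)\b|\bshould i take\b", re.IGNORECASE
    ),
}

SAFE_REDIRECT = (
    "I can't diagnose symptoms or recommend medication. "
    "Please contact your doctor or care team about this question."
)


@dataclass(frozen=True)
class ScopeDecision:
    allowed: bool
    reason: Optional[str] = None  # logged so escalations can be reviewed later
    reply: Optional[str] = None   # returned to the user instead of calling the model


def check_scope(message: str) -> ScopeDecision:
    """Block diagnosis/medication requests; everything else may go on to the model."""
    for reason, pattern in BLOCKED_INTENTS.items():
        if pattern.search(message):
            return ScopeDecision(allowed=False, reason=reason, reply=SAFE_REDIRECT)
    return ScopeDecision(allowed=True)
```

Returning a structured decision, rather than a bare boolean, keeps the block reason available for the logging and monitoring this article recommends.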

Technical Guardrails

Technically, building a safety layer around the LLM is critical. In practice, this means inserting filters and detectors on both sides of the model: on the prompt before it reaches the LLM, and on the response before it reaches the user. For example:

- Input filters: Scan the user’s prompt for sensitive content or disallowed requests. Use pattern-matching (regular expressions) or more advanced classifiers to detect PHI (names, Social Security numbers, MRNs) or dangerous instructions. For instance, a rule might catch phrases like “show me patient records for John Doe” or requests for a diagnosis or treatment, and refuse them. NVIDIA’s open-source NeMo Guardrails toolkit provides a useful blueprint: its input rails intercept unsafe queries before they reach the LLM.

- Output filters: Analyze the model’s response for compliance. Even if a prompt is safe, the LLM might generate disallowed content. Use keyword rules or classifiers to detect and block any attempt by the AI to give medical advice, reveal PHI, or violate policy. An output block can stop an LLM from naming a patient or disclosing a confidential test result. If a response is blocked, return a safe error message (e.g. “I’m sorry, I cannot help with that request.”). It’s also valuable to log the reason for any block: this makes your guardrails auditable, which is important for compliance reviews and debugging.

- Deployment controls: Only use models in environments that meet healthcare security standards. Never put raw PHI into a public chat interface; use enterprise-grade APIs or self-hosted models instead. For instance, Google Cloud’s Vertex AI and Microsoft’s Azure OpenAI Service can be covered by a BAA, do not use customer data to train their foundation models, and support encryption. Self-hosting an open-source LLM on your own secure servers gives maximum control, but requires you to implement the entire HIPAA stack yourself.

- Encryption and key management: Encrypt all PHI end to end. Use TLS for data in transit and strong encryption (e.g. AES-256) for data at rest, with keys held in a managed key service rather than alongside the data. If you store logs or AI transcripts, they must be encrypted and access-controlled. Keep them on HIPAA-eligible infrastructure covered by your BAA so PHI never lands on consumer systems.

- Version control and monitoring: Track which model version is deployed and who updated it. Maintain an audit trail of each AI decision pathway. Log every prompt, response, and any guardrail activation so that if an issue arises, you can trace what happened. Continuously monitor the model’s performance on key metrics: hallucination rates, bias, response time, and user feedback. Set up alerts so that abnormal behavior triggers human review.
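The output-filtering and block-logging steps above might be combined as follows. This is a sketch under stated assumptions: `OUTPUT_RULES`, the reason codes, and `filter_output` are hypothetical, the regexes stand in for the classifiers a real deployment would use, and the log records only reason codes and a request ID so the audit trail itself holds no PHI.

```python
import json
import logging
import re

logger = logging.getLogger("guardrails.output")

# Hypothetical rules: reason code -> pattern that must never reach the user.
OUTPUT_RULES = {
    "phi_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "phi_mrn": re.compile(r"\bMRN[:#\s]*\d{6,10}\b", re.IGNORECASE),
    # Crude proxy for dosing advice: a dosing verb followed by a dose in the same sentence.
    "dosing_advice": re.compile(
        r"\b(?:take|start|increase|double)\b[^.]*\b\d+(?:\.\d+)?\s?(?:mg|mcg|units?)\b",
        re.IGNORECASE,
    ),
}

REFUSAL = "I'm sorry, I cannot help with that request."


def filter_output(response: str, request_id: str) -> str:
    """Return the model response unchanged, or a refusal if any rule fires."""
    fired = [reason for reason, pattern in OUTPUT_RULES.items() if pattern.search(response)]
    if not fired:
        return response
    # Record why the response was blocked, but never the blocked text itself.
    logger.warning(json.dumps({"event": "output_blocked", "request_id": request_id, "reasons": fired}))
    return REFUSAL
```

Logging structured JSON makes it straightforward to count blocks per reason code, which feeds the monitoring and alerting described above.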

By implementing these technical guardrails, you treat AI safety as an engineering problem, not just a vague goal. A safety-driven approach builds guardrails as configurable infrastructure rather than an opaque “safety filter”. This means you can test rules, visualize how often they fire, and adjust them over time.
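Treating guardrails as configurable infrastructure can be as simple as keeping rules in data and counting every evaluation. The sketch below is one hypothetical way to do it; `GuardrailEngine`, the rule fields, and the alert threshold are illustrative, and a real system would load reviewed rule files and export the counters to a monitoring dashboard.

```python
import re
from collections import Counter


class GuardrailEngine:
    """Hypothetical rule engine: rules are data, and every check and firing is counted."""

    def __init__(self, rule_config: list[dict]):
        self.rules = [
            (rule["id"], rule["stage"], re.compile(rule["pattern"], re.IGNORECASE))
            for rule in rule_config
        ]
        self.checks = Counter()  # evaluations per stage ("input" / "output")
        self.fires = Counter()   # firings per rule id

    def evaluate(self, stage: str, text: str) -> list[str]:
        """Run all rules for one stage and return the ids of those that fired."""
        self.checks[stage] += 1
        fired = [rid for rid, s, pattern in self.rules if s == stage and pattern.search(text)]
        self.fires.update(fired)
        return fired

    def fire_rates(self) -> dict[str, float]:
        """Fraction of evaluations in each rule's stage where that rule fired."""
        rates = {}
        for rid, stage, _ in self.rules:
            if self.checks[stage]:
                rates[rid] = self.fires[rid] / self.checks[stage]
        return rates

    def alerts(self, max_rate: float) -> list[str]:
        # A rule that fires far more often than expected may signal misuse or model
        # drift: surface it for human review instead of silently blocking more traffic.
        return [rid for rid, rate in self.fire_rates().items() if rate > max_rate]


# Example configuration; in practice this would live in a version-controlled file.
RULE_CONFIG = [
    {"id": "no_diagnosis", "stage": "input", "pattern": r"\bdiagnos\w*"},
    {"id": "no_ssn", "stage": "output", "pattern": r"\b\d{3}-\d{2}-\d{4}\b"},
]
```

Because rules are plain data, the same configuration can be replayed against a fixture set of known-good and known-bad prompts in CI before any rule change ships.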

Operational and Ethical Safeguards

Technology alone isn’t enough – organizational processes and ethics are equally important. Consider the following best practices:

- Human-in-the-loop: Always require that a qualified clinician review any AI-generated clinical recommendation before anyone acts on it. Use LLMs to augment human expertise, not replace it. For example, if the AI suggests a treatment, a doctor should double-check it against established guidelines. Document who reviewed the AI output and what changes were made.

- Training and awareness: Educate all users (doctors, nurses, staff) about the capabilities and limits of the LLM system. Make sure they understand it should not be blindly trusted, and train them on how to recognize potential errors. Include protocols for what to do if the model’s response seems wrong or out of scope.

- Ethical guidelines: Adopt an internal policy on AI ethics. This might include principles like fairness (the AI should not systematically under-serve any patient group), transparency (users should be told when they are interacting with an AI), and accountability (assign a compliance officer to oversee the AI use). Maintain documentation of your AI risk assessments, design decisions, and mitigation plans. In case something goes wrong, have an incident response plan to correct errors and notify affected parties as required by law.

- Bias mitigation: Regularly audit your LLM for biased or unfair outputs. For healthcare, this might involve testing whether the model gives equitable advice across different ages, genders, ethnicities, etc. If the LLM was fine-tuned on medical data, ensure that training data was diverse. Apply fairness filters or re-weighting techniques if any bias is detected.

- Accountability and traceability: When an LLM is used in care, someone must be accountable. Define clear roles: developers should build safe models, deployers must validate them, and clinicians and patients must understand that AI is advisory, not authoritative. Keep detailed logs of decisions so you can trace each recommendation back to its origin: the input, the model version, and who reviewed it. This traceability is crucial for compliance reviews, even when the model’s internal reasoning cannot be fully explained.
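The review-documentation and traceability practices above can be supported by a tamper-evident audit trail. In this minimal sketch (the `AuditTrail` class, field names, and model version string are hypothetical), each entry stores the SHA-256 hash of the previous one, so editing any past entry breaks verification. Entries reference prompts and outputs by fingerprint, on the assumption that the full text lives in a separate encrypted, access-controlled store.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def fingerprint(text: str) -> str:
    """SHA-256 of a prompt or output, so the log can reference it without copying PHI."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class AuditTrail:
    """Hypothetical append-only log where each entry embeds the previous entry's hash."""

    def __init__(self):
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def append(self, **fields) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
            **fields,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; False means the chain was altered."""
        prev_hash = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or self._digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

A typical sequence appends an `ai_draft` entry (model version plus output fingerprint) and then a `clinician_review` entry (reviewer ID, action, and fingerprint of the edited text). A hash chain is tamper-evident rather than tamper-proof: anyone able to rewrite the whole log can recompute it, and dropping the newest entries is not detected, so the latest hash is worth anchoring in separate write-once storage.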

By combining technical controls with solid governance, healthcare organizations can treat LLMs as “enterprise-ready AI” whose safety is measurable and auditable. It transforms AI safety from an afterthought into a core part of the product lifecycle.
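For the bias audits described under bias mitigation, one common technique is counterfactual testing: send the same clinical vignette with only a demographic attribute changed and check whether the answer changes. This sketch assumes a hypothetical `ask_model` callable and a vignette in which the varied attributes should be clinically irrelevant. A differing answer is a flag for clinician review, not proof of bias, because some attributes legitimately change the right answer.

```python
from itertools import product
from typing import Callable

# Hypothetical vignette; only vary attributes that should NOT change the answer here.
TEMPLATE = (
    "Patient: {sex}, {ethnicity}. Symptoms: mild sore throat for two days, no fever. "
    "Answer only 'urgent' or 'routine'."
)
VARIANTS = {"sex": ["male", "female"], "ethnicity": ["Black", "White", "Hispanic", "Asian"]}


def counterfactual_audit(
    ask_model: Callable[[str], str], template: str, variants: dict[str, list[str]]
) -> dict[str, list[tuple[str, ...]]]:
    """Query every combination of attribute values and group the combinations by answer."""
    keys = list(variants)
    answers: dict[str, list[tuple[str, ...]]] = {}
    for combo in product(*(variants[k] for k in keys)):
        prompt = template.format(**dict(zip(keys, combo)))
        # Light normalization; constraining the model to fixed labels keeps comparison reliable.
        answer = ask_model(prompt).strip().lower()
        answers.setdefault(answer, []).append(combo)
    return answers


def is_consistent(answers: dict[str, list[tuple[str, ...]]]) -> bool:
    """True when every demographic variant received the same answer."""
    return len(answers) == 1
```

Asking for a fixed label set ("urgent" or "routine") is a deliberate design choice: comparing free-text answers for equivalence is much harder and would need a separate grading step.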

Putting It All Together

In summary, deploying LLMs in healthcare demands a multi-layered guardrail strategy:

- Legal understanding: Map out all applicable laws (HIPAA, FDA, GDPR, state privacy laws) and build your system to meet them.

- Risk assessment: At project start, identify what kind of PHI or medical advice the model will handle. Classify your use case: is it merely documentation, or is it influencing patient care?

- Data practices: Minimize PHI in prompts, tokenize or encrypt it, and use only secured, authorized data pipelines.

- Model and prompt controls: Design your prompts carefully, and implement keyword/pattern filters to block out-of-scope queries. Use “safe-completion” modes where the model rejects or escalates requests it isn’t allowed to handle.

- Review and monitoring: Never let the AI run unchecked. Establish human review workflows and continuous monitoring of AI outputs.

- Documentation: Record every decision – from design documents to change logs and audit trails. Good documentation not only helps in regulatory audits but also in debugging if something goes awry.
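The risk-assessment and documentation steps above can be turned into a simple release gate that refuses to ship a use case until its required controls are in place. The tiers, control names, and `UseCase` fields below are illustrative assumptions, not a regulatory taxonomy; whether a tool is actually SaMD remains a legal and regulatory determination.

```python
from dataclasses import dataclass

# Hypothetical mapping from risk tier to the controls that must be enabled before release.
REQUIRED_CONTROLS = {
    "administrative": {"phi_redaction", "audit_logging", "baa_signed"},
    "clinical_documentation": {"phi_redaction", "audit_logging", "baa_signed", "clinician_signoff"},
    "patient_care": {
        "phi_redaction", "audit_logging", "baa_signed", "clinician_signoff",
        "regulatory_review", "clinical_validation",
    },
}


@dataclass(frozen=True)
class UseCase:
    name: str
    influences_patient_care: bool  # e.g. triage, diagnosis or treatment suggestions
    drafts_clinical_records: bool  # e.g. notes or discharge summaries


def risk_tier(use_case: UseCase) -> str:
    """Classify by the highest-risk thing the model touches."""
    if use_case.influences_patient_care:
        return "patient_care"
    if use_case.drafts_clinical_records:
        return "clinical_documentation"
    return "administrative"


def missing_controls(use_case: UseCase, enabled: set[str]) -> set[str]:
    """Controls still required for this tier; an empty set means the gate passes."""
    return REQUIRED_CONTROLS[risk_tier(use_case)] - enabled
```

Running a check like this in CI, and storing its result with the change log, could give auditors a record of which controls were in place for each release.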

In practice, startups and SMEs building healthcare AI often partner with specialized agencies to navigate this complexity. An experienced AI provider will help translate your healthcare idea into an architecture that satisfies regulators while delivering value.

Safety is a journey, not a checkbox. By layering technical safeguards with ethical processes and legal compliance, healthcare innovators can confidently use LLMs for everything from better patient communication to more efficient care – without cutting corners on privacy or accuracy.

Interested in building safe, compliant LLM-based healthcare tools? Contact AxcelerateAI to discuss how we can help implement these safety guardrails in your project. Together, we can accelerate your AI-driven healthcare innovation – safely and responsibly.
