AS-One: Unified Computer Vision Framework

AS-One is a Python wrapper that unifies detection, tracking, segmentation, OCR, and pose estimation into a single, easy-to-use interface — enabling developers to swap models with minimal code changes.

What is AS-One?

Developed by Augmented Startups and maintained by AxcelerateAI, AS-One allows developers to experiment with modern computer vision models using standardised APIs. Instead of integrating different frameworks, pipelines, and dependencies for each model, AS-One provides one unified interface — switch from YOLOv5 to YOLOv9, or from ByteTrack to DeepSORT, with a single flag change.

Object Detection

YOLO v5–v9, YOLOX, YOLO-NAS with PyTorch, ONNX, CoreML

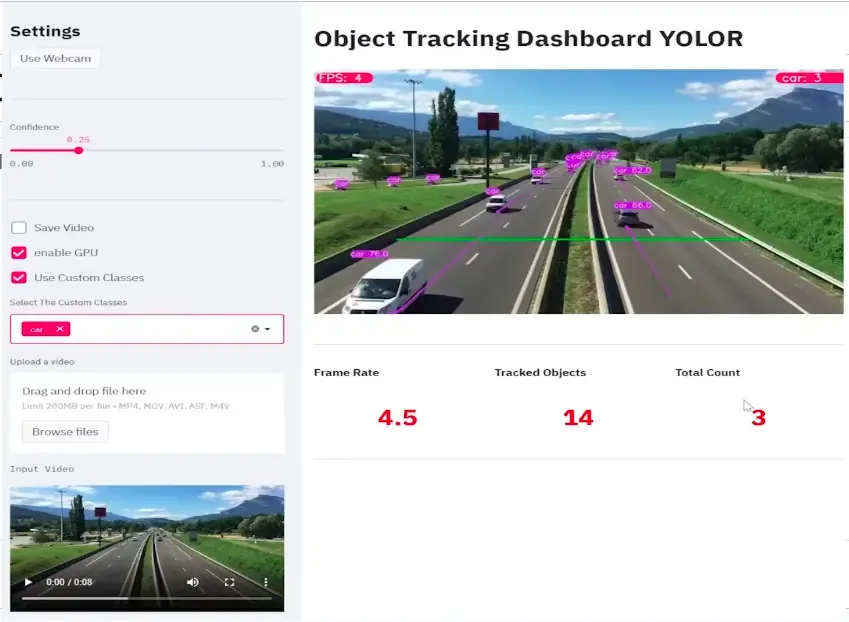

Object Tracking

ByteTrack, DeepSORT, NorFair, StrongSORT, OC-SORT, MoTPy

Segmentation

SAM (Segment Anything Model) integration with YOLO detection

Text Detection & OCR

CRAFT text detection with EasyOCR recognition + tracking

Pose Estimation

YOLOv7-w6-pose, YOLOv8m-pose — keypoint detection & visualization

Edge & Mobile

CoreML support for M1/M2 Apple Silicon, mobile-optimized inference

Get Started in Minutes

Install AS-One with pip, or build from source for Windows support and custom environments.

    pip install asone

This installs AS-One and all dependencies. For GPU support, also install the PyTorch build that matches your CUDA version.
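Before enabling GPU inference, it can help to confirm that the installed PyTorch build actually sees a CUDA device. The following is a minimal sketch, not part of AS-One: `cuda_is_usable` is a hypothetical helper name, and the only library call it relies on is PyTorch's `torch.cuda.is_available()`.

```python
def cuda_is_usable() -> bool:
    """Return True only if PyTorch is importable and reports a CUDA device."""
    try:
        import torch  # may be missing or CPU-only, depending on the environment
    except ImportError:
        return False
    return bool(torch.cuda.is_available())


if __name__ == "__main__":
    print("CUDA available:", cuda_is_usable())
```

The returned boolean can be passed as the `use_cuda` argument in the examples that follow, so the same script runs on both GPU and CPU machines.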

Run Your First Detection in 5 Lines

AS-One's unified API means you spend time on your application logic, not on framework integration boilerplate.

quick_start.py

    import asone
    from asone import ASOne

    # Instantiate with tracker + detector
    model = ASOne(
        tracker=asone.BYTETRACK,
        detector=asone.YOLOV9_C,
        use_cuda=True  # set False for CPU
    )

    # Track vehicles in a video
    tracks = model.video_tracker(
        'data/sample_videos/test.mp4',
        filter_classes=['car', 'truck']
    )

    for model_output in tracks:
        # Draw annotations on each frame
        annotations = ASOne.draw(model_output, display=True)
        # model_output also contains bboxes, ids, classnames, scores

Switch tracker or detector by changing a single flag; no other code changes are required. See all supported flags in the Usage section below.
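The track ids and class names carried by each `model_output` are enough for simple analytics, such as counting how many distinct vehicles appeared in a video. The sketch below works on plain Python lists and makes no assumption about how `model_output` exposes those fields. Both `count_unique_tracks` and the `(ids, class_names)` tuple layout are hypothetical, and extracting the values from `model_output` is left out on purpose.

```python
from collections import defaultdict
from typing import Dict, Iterable, Sequence, Set, Tuple

# One frame's results: (track ids, class names), aligned by position.
# This layout is an assumption for illustration, not AS-One's output type.
Frame = Tuple[Sequence[int], Sequence[str]]


def count_unique_tracks(frames: Iterable[Frame]) -> Dict[str, int]:
    """Count distinct track ids seen for each class across all frames."""
    seen: Dict[str, Set[int]] = defaultdict(set)
    for ids, class_names in frames:
        for track_id, name in zip(ids, class_names):
            seen[name].add(track_id)
    return {name: len(track_ids) for name, track_ids in seen.items()}


if __name__ == "__main__":
    frames = [
        ([1, 2], ["car", "truck"]),  # frame 1: car #1, truck #2
        ([1, 3], ["car", "car"]),    # frame 2: car #1 again, new car #3
    ]
    print(count_unique_tracks(frames))  # {'car': 2, 'truck': 1}
```

Counting by track id rather than by detection avoids counting the same vehicle once per frame, which is the main reason to pair a tracker with the detector.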

All Capabilities, One API

Every capability follows the same instantiation pattern. Swap flags to change models with zero structural changes to your code.
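Because models are selected through module-level constants such as `asone.YOLOV9_C` and `asone.BYTETRACK`, the choice can be driven by a config value or command-line argument instead of being edited in code. A minimal sketch under stated assumptions: `resolve_flag` is a hypothetical helper, and the `SimpleNamespace` is a stand-in with placeholder values so the example runs without AS-One installed.

```python
from types import SimpleNamespace
from typing import Any


def resolve_flag(flags: Any, name: str) -> Any:
    """Look up a model flag by name (case-insensitive) on a module-like object."""
    key = name.strip().upper()
    try:
        return getattr(flags, key)
    except AttributeError:
        raise ValueError(f"unknown model flag: {name!r}") from None


if __name__ == "__main__":
    # Stand-in for the `asone` module; the values are placeholders, not the real ones.
    fake_flags = SimpleNamespace(YOLOV9_C="detector-flag", BYTETRACK="tracker-flag")
    print(resolve_flag(fake_flags, "yolov9_c"))   # detector-flag
    print(resolve_flag(fake_flags, "ByteTrack"))  # tracker-flag
```

With AS-One installed, passing the `asone` module itself, as in `ASOne(detector=resolve_flag(asone, 'yolov9_c'), use_cuda=True)`, should follow the same instantiation pattern as the examples in this section.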

Run Object Detection

    import asone
    from asone import ASOne

    # Initialize detector (GPU)
    model = ASOne(detector=asone.YOLOV9_C, use_cuda=True)
    vid = model.read_video('data/sample_videos/test.mp4')

    for img in vid:
        detection = model.detecter(img)
        annotations = ASOne.draw(detection, img=img, display=True)
        # detection contains: bboxes, class_ids, scores, class_names

Custom Trained Weights

    # Use your own fine-tuned weights
    model = ASOne(
        detector=asone.YOLOV9_C,
        weights='data/custom_weights/my_model.pt',
        use_cuda=True
    )
    vid = model.read_video('data/sample_videos/test.mp4')

    for img in vid:
        detection = model.detecter(img)
        # Pass the class names your custom model was trained on
        annotations = ASOne.draw(
            detection, img=img, display=True,
            class_names=['license_plate', 'vehicle']
        )

Switch Models

    # Change detector with one flag
    model = ASOne(detector=asone.YOLOX_S_PYTORCH, use_cuda=True)

    # Apple Silicon (M1/M2): CoreML models
    model = ASOne(detector=asone.YOLOV8L_MLMODEL)  # no GPU needed
    model = ASOne(detector=asone.YOLOV5X_MLMODEL)
    model = ASOne(detector=asone.YOLOV7_MLMODEL)

Run from Terminal

    # GPU
    python -m asone.demo_detector data/sample_videos/test.mp4

    # CPU
    python -m asone.demo_detector data/sample_videos/test.mp4 --cpu

Everything in One Library

AS-One supports the most widely-used models in the detection, tracking, and segmentation ecosystem — and is continuously updated as new architectures are released.

- YOLOv5 (PyTorch, ONNX)

- YOLOv7 (PyTorch, ONNX)

- YOLOv8 (PyTorch, ONNX, CoreML)

- YOLOv9-C (PyTorch)

- YOLOX (PyTorch, ONNX)

- YOLO-NAS

- PP-YOLOE

- ByteTrack

- DeepSORT

- NorFair

- StrongSORT

- OC-SORT

- MoTPy

- SAM (Segment Anything)

- CRAFT (text detection)

- EasyOCR (recognition)

- YOLOv7-w6-pose

- YOLOv8m-pose

- YOLOv8l-pose

- ✅ YOLOv5 / v7 / v8 / v9

- ✅ YOLO-NAS

- ✅ SAM Integration

- ✅ Apple M1/M2 CoreML

- ✅ Pose Estimation

- ✅ OCR & Text Tracking

Need a Production Computer Vision System?

AS-One helps you experiment fast. AxcelerateAI helps you scale to production — with custom models, edge deployment, and enterprise SLAs.